Rewriting Our Onboarding Email Campaign

Alfred Lua / Written on 26 August 2020

Hello there,

Since my last note, I have been preparing for an upcoming product launch, which is happening next Wednesday. We will be announcing a feature called boosted post insights. It allows our customers to see data for their boosted Facebook and Instagram posts in a single tool, easily compare the paid vs organic results of their boosted posts, and add this new data to their social media reports. If you do catch the launch, I'd love to hear what you think!

On a related note, in case you missed it, I wrote about rethinking product launches last week. A reader kindly shared that it was "super helpful!"

For a few weeks now, I have mentioned that I am working on a new onboarding email campaign. It is almost ready to be shipped, and while things are still fresh in my mind, I wanted to write them down and share them with you. (It'll serve as a good record for me, too.)

Why rewrite the onboarding email campaign?

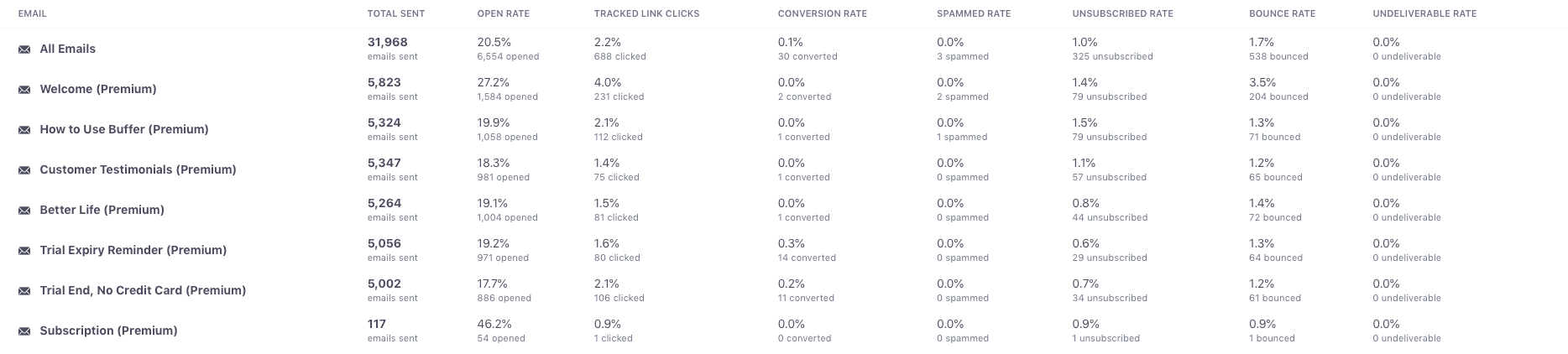

This trial onboarding email campaign is for what we now call Buffer. The trial allows people to try everything Buffer has to offer, both the publishing and the analytics solutions. I wrote the old email campaign about six months ago. It is relatively simple but thorough. There is a good mix of product tutorials, social proof, persuasion, and trial expiry reminders. (Here's a good teardown of it.) The open and click rates are averaging around 20.5 percent and 2.2 percent, respectively. While these numbers aren't great, improving them is not the reason I rewrote the email campaign.

The main reason is that our product has evolved. Back then, we had a multi-product strategy with separate products: a publishing product and an analytics product. We have since shifted away from that strategy and are combining the products into one. Buffer is now essentially a single product with multiple solutions. But in the old email campaign, I wrote about Buffer as two separate products, Publish and Analyze, so I wanted to update the language accordingly. Also, we have introduced new features in the last six months. The most significant is Campaigns, which allows customers to create multi-channel social media campaigns and automatically get a campaign report. Because it is now one of the most significant features in Buffer, I wanted to make sure people know about it during their trial. Finally, we discovered from our data that people haven't been using the analytics features as much as the publishing features. Yet people who do use them are more likely to subscribe to Buffer and to stay subscribed for longer. So I wanted to put more emphasis on our analytics in the email campaign.

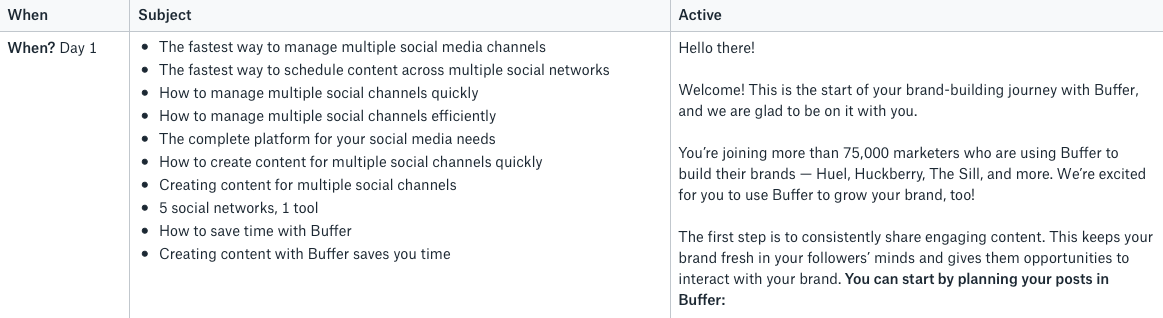

Since I'm updating the email campaign anyway, I also wanted to change two things about the old one. First, I didn't include any product images. The emails are all text. I wanted to take this opportunity to add some product screenshots because I think they can better educate trialists about Buffer. Second, I wanted to write new subject lines to see if I can improve the open rates.

Should I be making so many changes at once? How would I know what caused an improvement in the metrics? Or worse, a fall in the numbers? Some email marketers will disagree with me, but I think this is fine. I'm updating the email campaign mainly because the product has changed, not to optimize the numbers. The ultimate measure of email onboarding is whether it helps trialists understand how to succeed with Buffer and whether it makes more trialists subscribe to Buffer. It's hard to accurately measure the latter, but the former can be easily deduced. Our email campaign is outdated and isn't teaching trialists the best way to use Buffer. Even if its open and click rates were high, it would be useless.

How I think about the email campaign

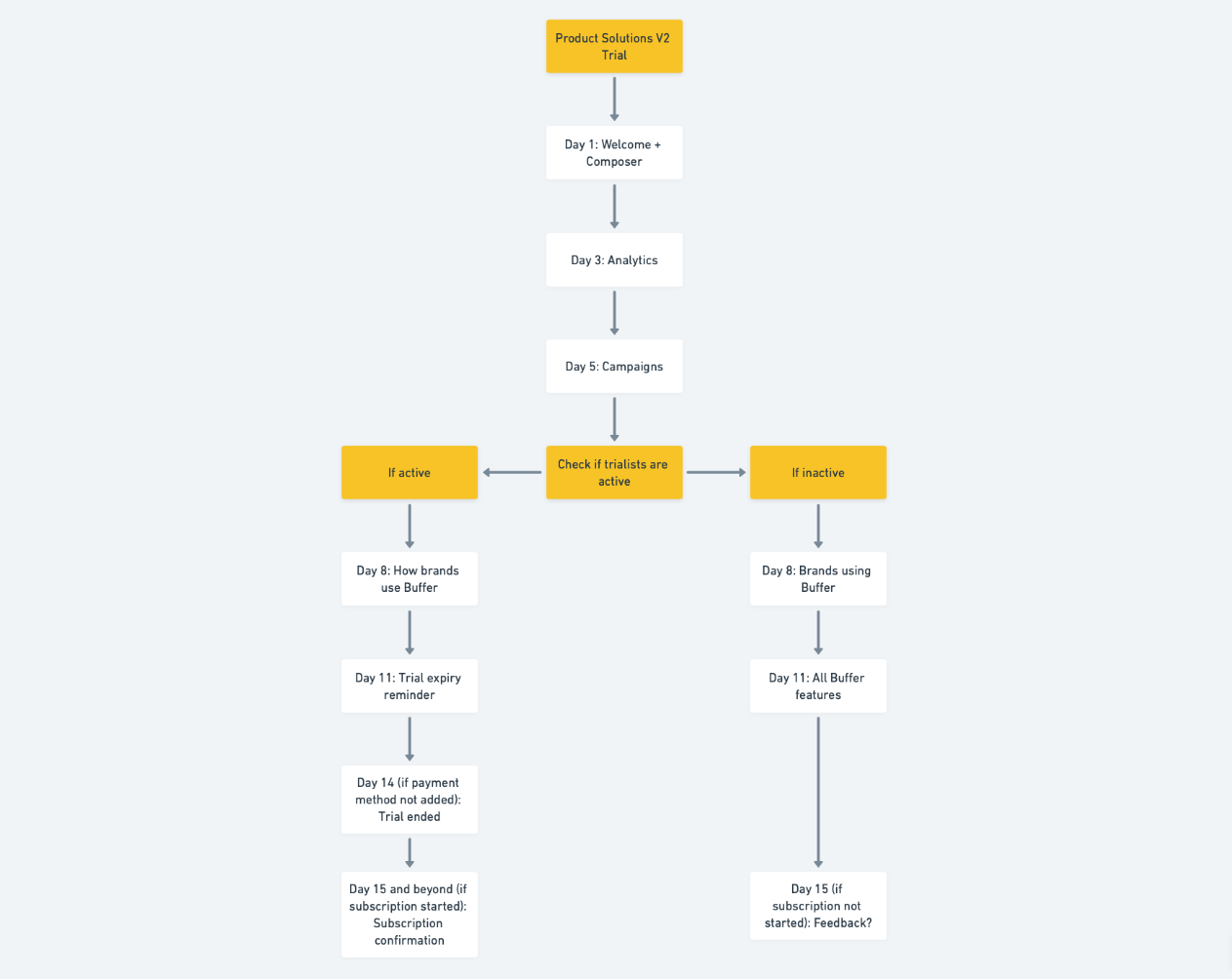

The first thing I did was to rethink the entire flow of the email campaign.

The old campaign is a straightforward time-based onboarding campaign. Trialists get emails on days 1, 3, 5, 8, 11, and 14 of their trial. They get the same emails regardless of what they do, or don't do, in the product.
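
As a rough illustration, the old time-based schedule can be sketched in a few lines of Python. This is only a sketch under my own assumptions: I treat day 1 as the day the trial starts, and the function name `send_dates` is made up for this example. It is not how the campaign is actually implemented.

```python
from datetime import date, timedelta

# Trial days on which the old campaign sends an email (from the post).
SEND_DAYS = [1, 3, 5, 8, 11, 14]


def send_dates(trial_start: date) -> list:
    """Return the calendar dates of the six emails.

    Assumption: day 1 is the trial start date itself, so day N falls
    N - 1 days after the start. Every trialist gets the same dates,
    regardless of what they do in the product.
    """
    return [trial_start + timedelta(days=day - 1) for day in SEND_DAYS]


if __name__ == "__main__":
    for sent_on in send_dates(date(2020, 8, 26)):
        print(sent_on.isoformat())
```

The point of the sketch is that the only input is the trial start date. Nothing about the trialist's behavior changes what they receive.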

One thing I kept reading and hearing from teammates is to include more behavior-triggered emails. Specifically, if trialists are not doing the specific thing that would help them understand the product, nudge them a few times. While I kind of agree with the concept, I have a few hesitations.

First, we had identified that scheduling a post in Buffer is an important step in understanding the value of the product. But I'm not sure that emailing trialists who haven't scheduled a post, to ask them to do so, is the right approach. Just because I email them to schedule a post doesn't mean they will. I think the best place and time to teach people how to schedule a post is inside the product, when they first sign up and have the highest motivation to try it. Because Buffer is mostly known as a social media scheduling tool, it's very unlikely that people who sign up for a trial don't know they should schedule a post. There must be other reasons why they signed up for Buffer and are not using it. Here's the list I came up with:

- They are researching multiple tools at the same time and didn't like Buffer.

- They don't understand the value of Buffer.

- They don't know how to use Buffer.

- They think Buffer isn't suitable for them.

- They think Buffer doesn't have the feature they need.

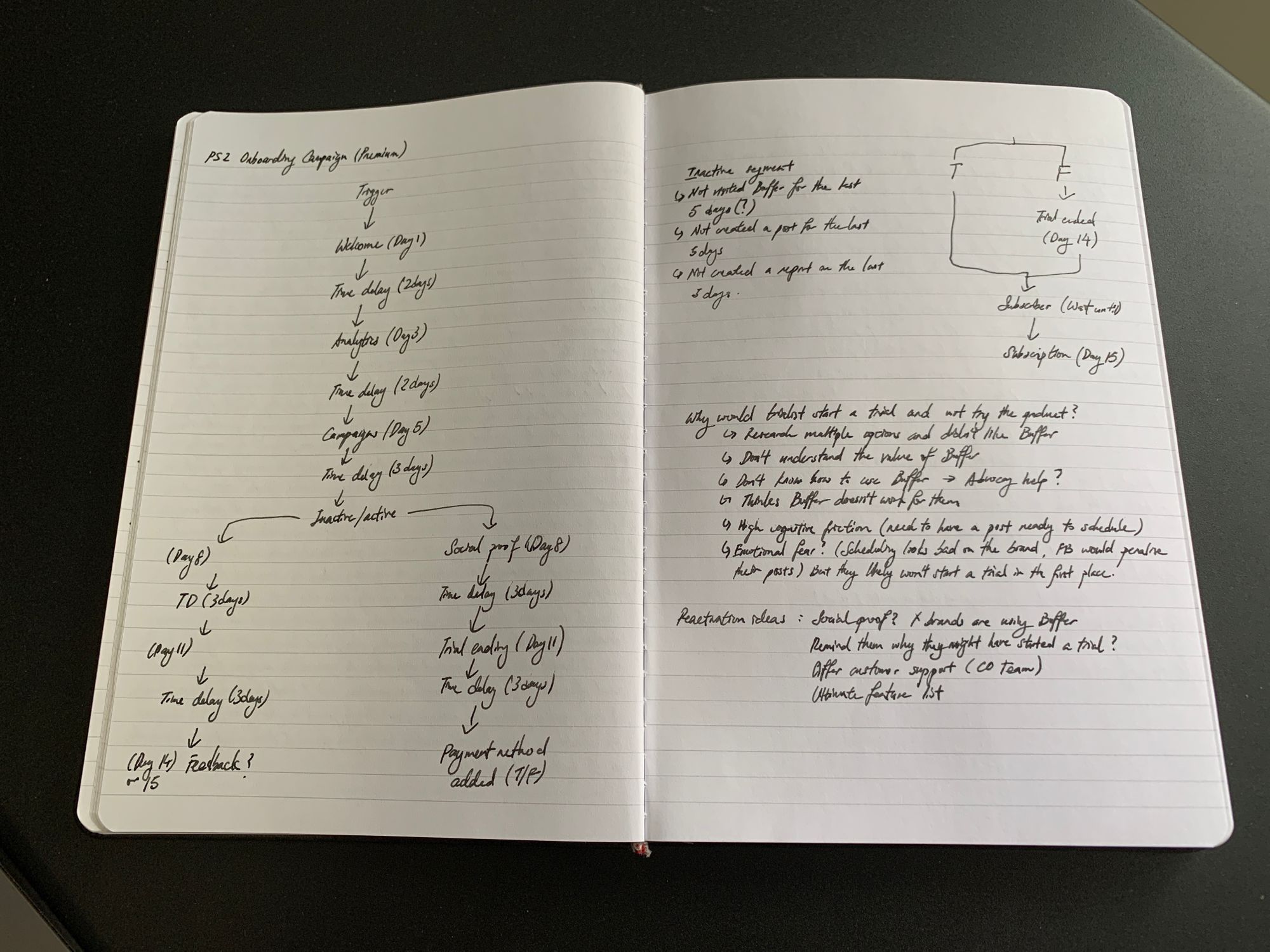

To deal with these, I decided to create a new section for the email campaign—inactive users will receive a few different emails that will address these. The goal is to re-engage them with these emails. Active users will, on the other hand, receive emails that encourage them to subscribe because they are actively using the product.

Second, our trial is only 14 days and I don't want to email our trialists too often. I think an email every two to three days is reasonable. This means I can have about five to six emails in the campaign. I don't want to "use up" these emails to remind trialists to schedule posts in Buffer. Yes, scheduling posts in Buffer is an important step for trialists to understand the product but there are other things to talk about to persuade them to use Buffer, such as addressing the concerns they have about the product, as mentioned above. So I added these three emails for inactive users:

- A list of relatively well-known brands that are using Buffer to let them know Buffer suits many small businesses, likely theirs, too.

- A list of our key features plus an offer to answer questions about our product, in case they misunderstood what Buffer can do.

- A simple question asking for their feedback.

How I wrote and set up the email campaign

Once I thought through the flow of the email campaign, I drew a flowchart of the email campaign in my notebook. It is a rough sketch to help my brain visualize the emails trialists will get during their trial.

After drawing the flow chart, I wrote the copy of each email in Dropbox Paper and wrote 10 subject lines for each email. I picked up this habit from writing 25 headlines for each of my blog posts when I was a content marketer. I believe I come up with better copy when I force myself to write down more subject lines or headlines.

I then asked a fellow product marketer and our customer onboarding team, who responds to any replies to these emails, for their feedback.

The email tool we are using is Customer.io. We used to hardcode all our onboarding emails, which made them very hard to change because we needed engineers to help with every edit. After migrating to Customer.io, we have been able to change email campaigns and set up new ones ourselves. (This was also possible because we started using Segment and tidied up our data architecture.)

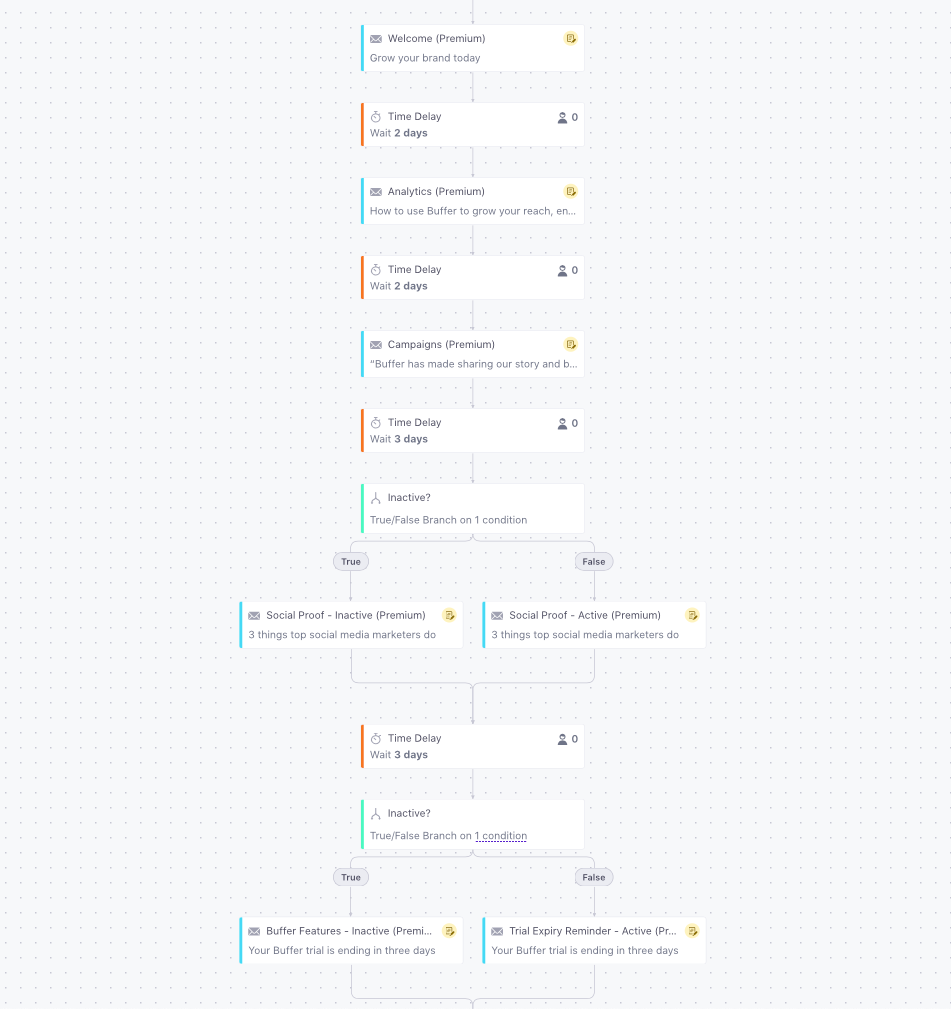

In Customer.io, email campaigns are visualized as a flow chart, which makes it easy for me to understand the flow, just like how I sketched the flow chart above.

Once people start a trial, they will enter this campaign and flow through it according to the time delays (e.g. wait 2 days) and conditions (e.g. are they active?). If they cancel their trial midway, they will stop receiving the remaining emails because I assume they are no longer interested in Buffer.
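
To make the flow concrete, here is a minimal sketch in Python of a campaign with time delays, an active/inactive condition, and an exit on cancellation. It is not Customer.io's API or the real campaign: the step list, the delays, the email labels, and the names `Trialist`, `STEPS`, and `emails_received` are all hypothetical, and I assume a trialist counts as active from a given trial day onwards.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class Trialist:
    # Hypothetical fields: the trial day from which the person counts as
    # active (None = never active) and the day they cancel (None = never).
    active_from_day: Optional[int] = None
    cancelled_on_day: Optional[int] = None


# Hypothetical steps: (days to wait first, email if active, email if inactive).
STEPS = [
    (0, "welcome", "welcome"),
    (2, "encourage subscribing", "brands that use Buffer"),
    (3, "encourage subscribing", "key features and offer to answer questions"),
    (3, "encourage subscribing", "request for feedback"),
]


def emails_received(trialist: Trialist) -> List[Tuple[int, str]]:
    """Walk the flow and return (trial day, email label) for each email sent."""
    day = 1
    sent = []
    for wait_days, active_email, inactive_email in STEPS:
        day += wait_days  # time delay, e.g. "wait 2 days"
        cancelled = (
            trialist.cancelled_on_day is not None
            and trialist.cancelled_on_day <= day
        )
        if cancelled:
            break  # cancelled trialists get none of the remaining emails
        # Condition check, evaluated at the moment the step is reached.
        is_active = (
            trialist.active_from_day is not None
            and trialist.active_from_day <= day
        )
        sent.append((day, active_email if is_active else inactive_email))
    return sent


if __name__ == "__main__":
    print(emails_received(Trialist()))                    # never active
    print(emails_received(Trialist(active_from_day=4)))   # active from day 4
    print(emails_received(Trialist(cancelled_on_day=5)))  # cancels on day 5
```

In this sketch the condition is re-checked at every step, so someone who becomes active midway moves from the re-engagement emails to the ones that encourage subscribing.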

The switch

After I incorporate the feedback from my colleagues, I'll switch to this new email campaign over a weekend. Far fewer trials are started on weekends, so this will minimize the number of people who might be affected by the switch.

I'm planning to compare the open and click rates about a month after the switch to see if they have improved or if I need to make further changes. Customer.io also allows us to track goals, and I have set the goal as becoming a paying subscriber. I will monitor that as well, but because too many factors can affect it, especially product changes, I wouldn't make changes based solely on that.
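
The comparison itself is simple arithmetic, sketched below in Python. The baseline rates are the ones from the old campaign. Everything else is an assumption for illustration: the function names are made up, the counts in the example are invented and not real results, and I assume both rates are calculated against delivered emails (some tools calculate the click rate against opens instead).

```python
# Baseline from the old campaign (from the post), in percent.
OLD_OPEN_RATE = 20.5
OLD_CLICK_RATE = 2.2


def rate_percent(events: int, delivered: int) -> float:
    """Rate as a percentage of delivered emails (assumed denominator)."""
    if delivered <= 0:
        raise ValueError("delivered must be positive")
    return 100.0 * events / delivered


def point_change(new_rate: float, old_rate: float) -> float:
    """Difference in percentage points, rounded to one decimal place."""
    return round(new_rate - old_rate, 1)


if __name__ == "__main__":
    # Invented counts for illustration only.
    delivered, opened, clicked = 1000, 230, 30
    new_open = rate_percent(opened, delivered)
    new_click = rate_percent(clicked, delivered)
    print("open rate change:", point_change(new_open, OLD_OPEN_RATE))
    print("click rate change:", point_change(new_click, OLD_CLICK_RATE))
```

Reporting the change in percentage points keeps it clear that a move from 20.5 percent to 23 percent is a 2.5-point change, not a 2.5 percent improvement.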

Do you have any questions about this? Let me know. I'm happy to share more.